| Name: | Dave Zhenyu Chen |
|---|---|
| Position: | Ph.D. Candidate |
| E-Mail: | zhenyu.chen@tum.de |
| Phone: | +49-89-289-17501 |
| Room No: | 02.07.037 |

Bio

I'm a Ph.D. candidate at the TUM Visual Computing Group, advised by Prof. Dr. Matthias Nießner and Prof. Dr. Angel X. Chang. Previously, I received my Master's degree in Informatics from Ludwig Maximilian University of Munich (LMU). Before that, I received my Bachelor's degree in Computer Science from the University of Electronic Science and Technology of China (UESTC).

Research Interest

3D computer vision, natural language processing, cross-modal deep learning, representation learning

Publications

2024

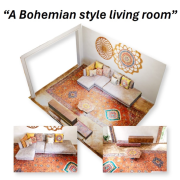

| SceneTex: High-Quality Texture Synthesis for Indoor Scenes via Diffusion Priors |
|---|
| Dave Zhenyu Chen, Haoxuan Li, Hsin-Ying Lee, Sergey Tulyakov, Matthias Nießner |
| CVPR 2024 |
| We propose SceneTex, a novel method for generating high-quality and style-consistent textures for indoor scenes using depth-to-image diffusion priors. At its core, SceneTex uses a multiresolution texture field to implicitly encode the mesh appearance. To further ensure style consistency across views, we introduce a cross-attention decoder that predicts the RGB values by cross-attending to pre-sampled reference locations in each instance. |
| [video][bibtex][project page] |

2023

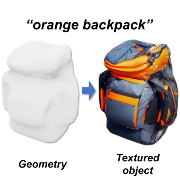

| Text2Tex: Text-driven Texture Synthesis via Diffusion Models |
|---|
| Dave Zhenyu Chen, Yawar Siddiqui, Hsin-Ying Lee, Sergey Tulyakov, Matthias Nießner |
| ICCV 2023 |
| We present Text2Tex, a novel method for generating high-quality textures for 3D meshes from given text prompts. Our method incorporates inpainting into a pre-trained depth-aware image diffusion model to progressively synthesize high-resolution partial textures from multiple viewpoints. Furthermore, we propose an automatic view sequence generation scheme to determine the next best view for updating the partial texture. |
| [video][bibtex][project page] |

| UniT3D: A Unified Transformer for 3D Dense Captioning and Visual Grounding |
|---|
| Dave Zhenyu Chen, Ronghang Hu, Xinlei Chen, Matthias Nießner, Angel X. Chang |
| ICCV 2023 |
| We propose UniT3D, a simple yet effective fully unified transformer-based architecture for jointly solving 3D visual grounding and dense captioning. UniT3D enables learning a strong multimodal representation across the two tasks through a supervised joint pre-training scheme with bidirectional and seq-to-seq objectives. |
| [bibtex][project page] |

2022

| D3Net: A Speaker-Listener Architecture for Semi-supervised Dense Captioning and Visual Grounding in RGB-D Scans |
|---|
| Dave Zhenyu Chen, Qirui Wu, Matthias Nießner, Angel X. Chang |
| ECCV 2022 |
| We present D3Net, an end-to-end neural speaker-listener architecture that can detect, describe, and discriminate. D3Net unifies dense captioning and visual grounding in 3D in a self-critical manner. This self-critical property also introduces discriminability during object caption generation and enables semi-supervised training on ScanNet data with partially annotated descriptions. |
| [video][bibtex][project page] |

2021

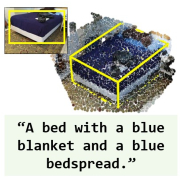

| Scan2Cap: Context-aware Dense Captioning in RGB-D Scans |
|---|
| Dave Zhenyu Chen, Ali Gholami, Matthias Nießner, Angel X. Chang |
| CVPR 2021 |
| We introduce the new task of dense captioning in RGB-D scans with a model that can densely localize objects in a 3D scene and describe them using natural language in a single forward pass. |
| [video][code][bibtex][project page] |

2020

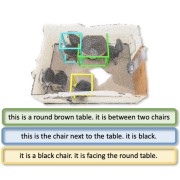

| ScanRefer: 3D Object Localization in RGB-D Scans using Natural Language |
|---|
| Dave Zhenyu Chen, Angel X. Chang, Matthias Nießner |
| ECCV 2020 |
| We propose ScanRefer, a method that learns a fused descriptor from 3D object proposals and encoded sentence embeddings to address the newly introduced task of 3D object localization in RGB-D scans using natural language descriptions. Along with the method, we release a large-scale dataset of 51,583 descriptions of 11,046 objects from 800 ScanNet scenes. |
| [video][code][bibtex][project page] |