Mask3D: Pre-training 2D Vision Transformers by Learning Masked 3D Priors

¹Meta ²Technical University of Munich

Proc. Computer Vision and Pattern Recognition (CVPR), IEEE, June 2023

Abstract

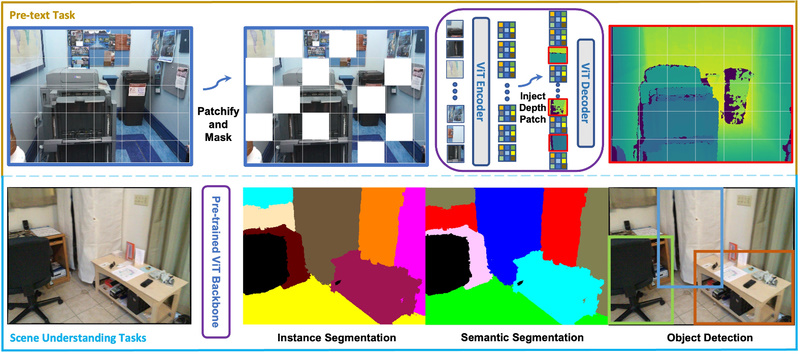

Current popular backbones in computer vision, such as Vision Transformers (ViT) and ResNets, are trained to perceive the world from 2D images. However, to capture 3D structural priors more effectively in 2D backbones, we propose Mask3D, which leverages existing large-scale RGB-D data in a self-supervised pre-training scheme to embed these 3D priors into 2D learned feature representations. In contrast to traditional 3D contrastive learning paradigms requiring 3D reconstructions or multi-view correspondences, our approach is simple: we formulate a pretext reconstruction task by masking RGB and depth patches in individual RGB-D frames. We demonstrate that Mask3D is particularly effective in embedding 3D priors into the powerful 2D ViT backbone, enabling improved representation learning for various scene understanding tasks, such as semantic segmentation, instance segmentation, and object detection. Experiments show that Mask3D notably outperforms state-of-the-art 3D pre-training on ScanNet, NYUv2, and Cityscapes image understanding tasks, with an improvement of +6.5% mIoU on ScanNet image semantic segmentation.
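To make the masking step of the pretext task concrete, the following is a minimal illustrative sketch in NumPy, not the authors' implementation. It zeroes out a random subset of non-overlapping patches in an RGB image and, separately, in a depth map. The function name `mask_patches`, the patch size of 16, the mask ratio of 0.5, and the choice to sample the RGB and depth masks independently are all assumptions made for illustration; the abstract does not specify them.

```python
import numpy as np


def mask_patches(image, patch_size, mask_ratio, rng):
    """Zero out a random subset of non-overlapping square patches.

    Hypothetical helper for illustration only.
    image: array of shape (H, W, C); H and W must be multiples of patch_size.
    Returns the masked copy and a boolean patch grid (True where masked).
    """
    h, w = image.shape[:2]
    if h % patch_size or w % patch_size:
        raise ValueError("image size must be a multiple of patch_size")
    gh, gw = h // patch_size, w // patch_size

    # Pick which patches to hide, without replacement.
    num_masked = int(round(mask_ratio * gh * gw))
    flat = np.zeros(gh * gw, dtype=bool)
    flat[rng.choice(gh * gw, size=num_masked, replace=False)] = True
    grid = flat.reshape(gh, gw)

    # Expand the patch grid to pixel resolution and zero the hidden pixels.
    pixel_mask = grid.repeat(patch_size, axis=0).repeat(patch_size, axis=1)
    masked = image.copy()
    masked[pixel_mask] = 0
    return masked, grid


# Synthetic stand-in for one RGB-D frame (random values, not real data).
rng = np.random.default_rng(0)
rgb = rng.random((64, 64, 3)).astype(np.float32)
depth = rng.random((64, 64, 1)).astype(np.float32)

# Assumed settings: 16x16 patches, half of the patches masked per modality.
masked_rgb, rgb_grid = mask_patches(rgb, 16, 0.5, rng)
masked_depth, depth_grid = mask_patches(depth, 16, 0.5, rng)
```

In a pre-training setup along these lines, a network would receive the masked inputs and be trained to reconstruct the hidden content; the reconstruction targets and loss are not stated in the abstract and are left out of this sketch.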

Bibtex
