PlaneMatch: Patch Coplanarity Prediction for Robust RGB-D Reconstruction

1National University of Defense Technology (NUDT) 2Technical University of Munich 3Princeton University

Proceedings of the European Conference on Computer Vision (ECCV 2018)

Abstract

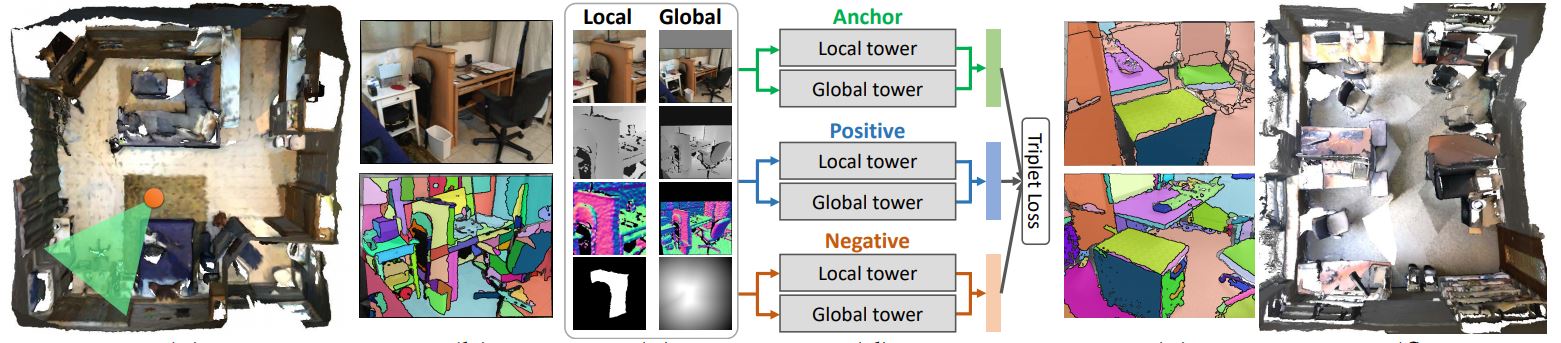

We introduce a novel RGB-D patch descriptor designed for detecting coplanar surfaces in SLAM reconstruction. The core of our method is a deep convolutional neural net that takes in RGB, depth, and normal information of a planar patch in an image and outputs a descriptor that can be used to find coplanar patches from other images.We train the network on 10 million triplets of coplanar and non-coplanar patches, and evaluate on a new coplanarity benchmark created from commodity RGB-D scans. Experiments show that our learned descriptor outperforms alternatives extended for this new task by a significant margin. In addition, we demonstrate the benefits of coplanarity matching in a robust RGBD reconstruction formulation.We find that coplanarity constraints detected with our method are sufficient to get reconstruction results comparable to state-of-the-art frameworks on most scenes, but outperform other methods on standard benchmarks when combined with a simple keypoint method.

Bibtex

Bibtex